Healthcare interoperability makes it easier for clinics, hospitals, and private doctor’s offices to exchange patient information freely. Unfortunately, security risks increase as systems become more connected, making it hard to conform to federal and state government regulations.

How Healthcare Interoperability Could Cause a Security Risk

Interoperability in electronic health records (EHR) benefits both the patient and the healthcare facility, but you’ll need to protect your data from hackers if you want to put interoperability to good use.

1. Hackers Gain Access to a Lot of Data

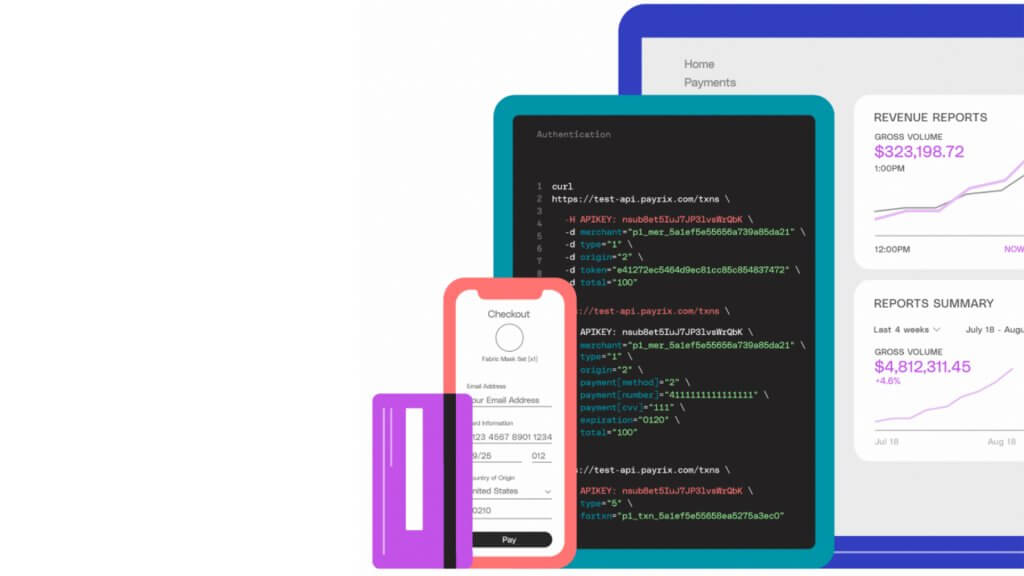

Healthcare interoperability can’t exist without APIs (application programming interfaces), which is both a blessing and a curse. APIs connect otherwise closed IT systems and siloed data stores, managing the flow of information between two or more points, typically automatically.

However, APIs handle a lot of data. If the system gets hacked, the attacker gains access to far more information than they would by stealing a single file or document. A compromised API may open the floodgates to a total data breach, which could expose the records of millions of patients.

2. Violating HIPAA Privacy Regulations

The healthcare industry has adopted several technology solutions to secure and expand its business model. While managed APIs are considered very secure, any unauthorized access would violate HIPAA privacy regulations, which could cause fines or a complete shutdown.

Even if a healthcare provider does everything it can to secure its network, it can’t control what the patient does. Some patients may share their healthcare data with a third party and expose themselves to a data breach. If the provider can’t show that the breach originated with the patient, the provider may be held responsible.

3. Lack of Privacy and/or Security Policy

Healthcare organizations must establish privacy and security policies that stay consistent with the Precision Medicine Initiative (PMI) privacy and security principles to assess any risk that could occur. Organizations have to assume that a hack could happen at any time if they want to ensure their patients’ safety.

With a policy in place, IT staff will know what to do when a breach occurs. Staff members need to know how to react to a breach, how to avoid scams, and who should and shouldn’t have access to data. If some staff work remotely, dictate who can access your systems from home.

4. Missing Encryption or Staff Authorization

Before organizations integrate their systems, they’ll need to evaluate their service provider’s infrastructure, technical capabilities, and security practices. Connections should be protected using Transport Layer Security (TLS) version 1.2 or higher, with a strong cipher such as AES, to protect data while it’s in transit.
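As a rough illustration of the transport requirement, the sketch below builds a client-side TLS configuration with Python’s standard `ssl` module and refuses anything older than TLS 1.2. The function name `make_client_context` is hypothetical, and a real deployment would also need to follow the service provider’s own certificate and cipher requirements.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that rejects protocol versions below TLS 1.2.

    Hypothetical helper for illustration only.
    """
    # create_default_context() turns on certificate verification and
    # hostname checking for client connections.
    context = ssl.create_default_context()
    # Refuse to negotiate anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.minimum_version.name)
```

A context like this can be passed to most standard-library clients, for example through the `context` argument of `http.client.HTTPSConnection`.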

The system itself also needs to verify the user’s information before granting access and validate the user’s ID when someone wants to issue credentials to a third party. Every action should be tied to a known ID, IP, or password, so any breach can be traced back to a person, device, or system.
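To make the idea of traceable actions concrete, here is a minimal in-memory sketch of an audit log that refuses to record an action unless it carries a user ID and a source IP. The `AuditEntry` and `AuditLog` names, their fields, and the sample values are all assumptions made for illustration; a production system would write to tamper-resistant storage instead of a Python list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One traceable action (hypothetical structure)."""
    timestamp: str
    user_id: str
    source_ip: str
    action: str
    record_id: str


class AuditLog:
    """Keeps every action tied to a known user ID and IP address."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, user_id: str, source_ip: str, action: str, record_id: str) -> AuditEntry:
        # Reject anonymous actions so every entry can be traced back later.
        if not user_id or not source_ip:
            raise ValueError("every action must be tied to a known user ID and IP")
        entry = AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            user_id=user_id,
            source_ip=source_ip,
            action=action,
            record_id=record_id,
        )
        self._entries.append(entry)
        return entry

    def actions_by(self, user_id: str) -> list[AuditEntry]:
        # Return everything a given user did, in the order it happened.
        return [e for e in self._entries if e.user_id == user_id]


log = AuditLog()
log.record("dr-smith", "10.0.0.5", "read", "patient-001")
log.record("dr-smith", "10.0.0.5", "update", "patient-001")
print(len(log.actions_by("dr-smith")))
```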

5. No Alarm System When a Breach Occurs

Unless a security breach results in a shutdown, you may not even know it happened. Even if you tied specific inputs to something you can trace, that won’t prevent more data from leaking out of the system. You’ll need to set up an alarm that triggers when your system undergoes an unexpected change.

Or, you could code the system to send a notification when any known change occurs, even if it isn’t malicious. Your IT staff won’t be able to check everything, but the notifications will give them a breadcrumb trail that points to potentially malicious behavior. To save time, focus on unauthorized alterations.
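One simple way to approximate such an alarm is to fingerprint each record and compare the fingerprints against a trusted baseline, flagging only the changes that nobody was authorized to make. The sketch below assumes records are plain dictionaries keyed by a record ID; the function names, the sample records, and the `authorized_ids` set are hypothetical.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Return a SHA-256 hash of a record in a canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def find_unauthorized_changes(
    baseline: dict[str, str],
    current: dict[str, dict],
    authorized_ids: set[str],
) -> list[str]:
    """List the IDs of records that changed or vanished without authorization."""
    alerts = []
    for record_id, record in current.items():
        # A new or modified record will not match its baseline fingerprint.
        changed = baseline.get(record_id) != fingerprint(record)
        if changed and record_id not in authorized_ids:
            alerts.append(record_id)
    for record_id in baseline:
        # A record missing from the current data counts as a change too.
        if record_id not in current and record_id not in authorized_ids:
            alerts.append(record_id)
    return sorted(alerts)


# Hypothetical sample data: take a baseline, then alter two records.
records = {
    "patient-001": {"name": "A. Example", "allergy": "none"},
    "patient-002": {"name": "B. Example", "allergy": "penicillin"},
}
baseline = {rid: fingerprint(rec) for rid, rec in records.items()}

records["patient-001"]["allergy"] = "latex"  # approved update
records["patient-002"]["allergy"] = "none"   # nobody approved this one

alerts = find_unauthorized_changes(baseline, records, authorized_ids={"patient-001"})
print(alerts)
```

Only the record altered without approval ends up in `alerts`, which keeps the notification volume manageable for IT staff.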